While the early 1960s saw the first experimental CAD systems such as Sutherland’s Sketchpad, Itek’s Electronic Drafting Machine and General Motors DAC-1, the latter part of the decade saw a burst of research activity that laid the foundation for the eventual commercialization of this technology. Obviously, dedicated mainframe computers such as the TX-2 used by Sutherland would not be practical for commercial systems. Likewise, given the limited processing power of the then available computers, much of the graphics processing, as well as the memory for refreshing displayed images, needed to be offloaded to the display terminals themselves. This period of time saw the development of three important devices that eventually spurred the introduction of commercial CAD systems – the storage tube display, the minicomputer and the tablet.

Development of the tablet as a light pen alternative

As interest in computer graphics began to grow, so too did the interest in developing a low-cost graphical input device to replace the light pen. The latter device was somewhat expensive and required users to keep their hands raised while working. Although the light pen had the advantage of being able to directly select a displayed item, many researchers felt that it was an awkward way to work and began to look for an alternative technology, especially one that might be less expensive.

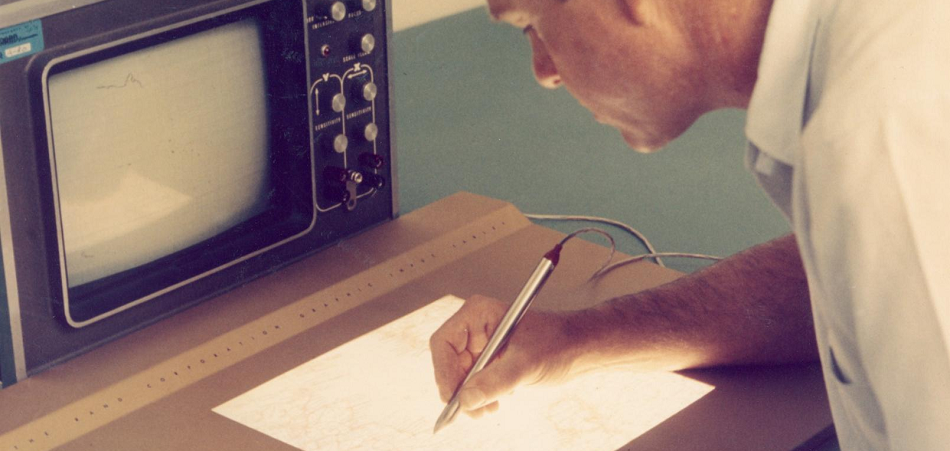

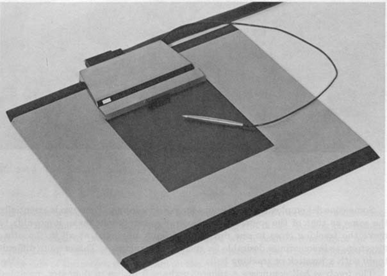

One of the first indirect pointing devices was the RAND tablet developed by Santa Monica, California-based RAND Corporation in 1964 working under a contract from the Department of Defense’s Advanced Research Projects Agency in Washington. This device had an active surface 10.24 inches by 10.24 inches with a resolution of 100 lines per inch. The tablet was actually a large circuit board with X axis resolution lines in one direction and Y axis lines in the other. As the user moved a stylus across the surface of the tablet, the position in both X and Y directions was sensed and fed to the computer controlling the graphics display device.[1]

Initially, tablets provided a low cost alternative to the light pen, but, eventually, they proved to be invaluable when storage tube displays were introduced since a light pen could not be used with a storage tube display. At first, RAND simply provided copies of its tablet to other research organizations but eventually it was commercialized by RAND and by other companies.

Additional development work was also underway at MIT’s Lincoln Laboratory where Larry Roberts created an experimental pointing device that functioned in three-dimensional space using four ultrasonic sensors. While the technology was workable, the concept never really caught on with users and few three-dimensional pointing devices were manufactured.

Another device developed at Lincoln Lab around the same time was a hardware comparator that would take an X/Y coordinate location entered from a tablet device and determine the graphic element being pointed at without requiring the application program to examine the entire database looking for the displayed element nearest this location. It did this by recording the coordinate values of the location pointed at and then determining if any displayed vector or point went through that location. (I assume that it utilized a small area around the specific coordinate values in question.) When it found a match, it caused a hardware interrupt so that the display item being pointed to could be identified.
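The comparison the hardware performed can be sketched in software. The following is only an illustration of the idea, not a description of the Lincoln Lab circuitry: the `Vector` structure, the `pick` function and the `tolerance` value (standing in for the small area around the pointed-at coordinates) are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vector:
    """A displayed line segment with a name (hypothetical structure)."""
    name: str
    x1: float
    y1: float
    x2: float
    y2: float


def distance_to_segment(px, py, v):
    """Shortest distance from the point (px, py) to the segment v."""
    dx, dy = v.x2 - v.x1, v.y2 - v.y1
    length_sq = dx * dx + dy * dy
    if length_sq == 0:
        # Degenerate segment: treat it as a single point.
        return ((px - v.x1) ** 2 + (py - v.y1) ** 2) ** 0.5
    # Project the point onto the segment and clamp to its endpoints.
    t = ((px - v.x1) * dx + (py - v.y1) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    cx, cy = v.x1 + t * dx, v.y1 + t * dy
    return ((px - cx) ** 2 + (py - cy) ** 2) ** 0.5


def pick(px, py, displayed, tolerance=2.0):
    """Return the first displayed vector passing within `tolerance`
    of the pointed-at location, or None if nothing matches."""
    for v in displayed:
        if distance_to_segment(px, py, v) <= tolerance:
            return v
    return None


displayed = [
    Vector("a", 0, 0, 100, 0),
    Vector("b", 0, 50, 100, 50),
]
hit = pick(40, 51, displayed)   # stylus position close to vector "b"
miss = pick(40, 25, displayed)  # stylus position between the two vectors
```

In the hardware version the match was found as each element was being drawn, so the application program did not have to run a search loop like this one.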

According to Roberts, “With comparator hardware for the central display generator, position-pointing devices are preferable to light pens because there is no need to track them; the pointing is more precise, and they will work with long persistence phosphors.”[3] With storage tube display terminals starting to become available around this time, the need for an alternative to the light pen was a major issue and the tablet appeared to provide that alternative. The need for a hardware comparator to identify displayed graphical elements faded as low cost computers with high computational capabilities became more prevalent. Consequently, hardware-based graphical comparators never became a key component of commercial CAD systems.

One problem associated with tablets was that they required a considerable amount of computer processing to track the stylus and convert hand movements to graphical input the host computer could understand. With the advent of time-sharing, host computers were being used simultaneously by multiple users and the resources were not available to support a significant number of graphic terminals equipped with tablets.

System Development Corporation, a government contractor in Santa Monica, California, addressed this problem by placing a Digital PDP-1 between the graphic terminals and the host time-sharing computer. The PDP-1 took on the task of tracking stylus movement and providing data to the application program running on the host when the user initiated or completed a movement of the stylus such as defining the beginning or the end of a line. The PDP-1 sampled pen movements either 250 or 500 times per second. At the higher rate, tablet support used about 30% of the PDP-1’s computing capacity.[4]

While the Rand Tablet used a grid of fine lines (1,000 by 1,000 or about 100 per inch) through which signals flowed, other techniques were also promoted. Sylvania Electronic Systems introduced the Sylvania Data Tablet Model DT-1 which used an analog technique to determine the stylus position. The Sylvania unit could also provide a rough measure of height above the tablet as well as having several other interesting features such as being transparent so the tablet could be placed over a CRT screen. The DT-1 also used a pen for its stylus which enabled the user to create a hard copy of information being sketched. While these were all interesting features, users were either uninterested in them or were unwilling to spend the extra money for these capabilities and the device never caught on.[5]

Among the advantages the tablet had over the light pen was the fact that when the light pen was held against the surface of the CRT, it physically blocked what the operator was pointing to. To get around this limitation many software programs used the concept of a “pseudo point” that was a displayed cursor offset slightly from the exact spot the operator was pointing to. Typically, the pseudo point would be slightly above the light pen’s physical location. The application software would interpret the pseudo point as representing the coordinates the operator was actually interested in, whether it was to select an existing element or to define a new point on the screen.

1966 Wall Street Journal article

One of the major issues that has plagued the CAD industry during its entire history has been the lack of public recognition for what this technology can accomplish and the positive impact it has had on design and manufacturing productivity. One of the few early articles about CAD in the general press appeared in the October 25, 1966 issue of the Wall Street Journal. This front page article was titled “Engineers Focus Light On Screen to Design Visually Via Computer.”

Written by Scott R. Schmendal, it started off: “Scientists and engineers who like to work out difficult problems by using sketches can dispense with such traditional tools as chalk and pencil. A major advance in computer technology is beginning to give them a major new tool – a TV-like screen linked to a computer, on which sketches can be made using beams of light.”[6] Schmendal used automotive design analogies extensively in his article which was particularly well written given the overall newness of computer technology, much less interactive graphics. The article described how sketches of an automobile could be created, edited, and rotated to different viewing orientations. This was done in a very straightforward manner.

A substantial portion of the article talked about the expected future ability of these graphics systems to create control tapes for NC machine tools. “…the day is fast approaching when the computer will be able, for example, to analyze a gear drawing on the screen and automatically turn out a coded tape that will describe how the part is to be manufactured.”[7] At the time, part programming was still an extremely time-consuming process that was typically done manually. The author quoted S. H. (Chase) Chasen, who at the time was the head of computer graphics research at Lockheed-Georgia, describing the current state of NC development at that company.

Lockheed had generated experimental NC tapes the previous November and was planning to produce production parts for the C5A aircraft before the end of 1966. Chasen described the expected payoff for this application by comparing the new technology to that used with building the C141 plane where it took an average of 60 hours to prepare each control tape for the 1,500 parts produced using NC machine tools. Chasen was quoted as stating: “Our initial estimate was that computer graphics would reduce that average to about 10 hours but now we think that the savings may be even greater.”[8] This work at Lockheed is described in greater depth below.

The article went on to list a number of companies doing research in applying computer graphics to engineering design including IBM, Control Data Corporation, Bunker-Ramo, General Electric, Sperry Rand, Information Displays and Scientific Data Systems. The article’s description of graphics activity at GM was somewhat vague while its discussion of IBM’s work in this area was more descriptive. IBM had introduced the 2250 graphics terminal as a System/360 peripheral device in April, 1964. By the date of the Wall Street Journal article, the company had delivered “dozens” of units and had orders from “hundreds” of customers for more units, about equally split between industrial and defense-aerospace users.[9] It is entirely possible that IBM meant to say that it had orders for hundreds of additional units rather than hundreds of customers. IBM was also reported to have introduced an improved version of the 2250 priced at $76,800 each.

Schmendal credits Ivan Sutherland with creating much of the interest in computer graphics as an engineering design tool. After completing his work on Sketchpad and obtaining his Ph.D. in electrical engineering in 1963, Sutherland had taken a job with the Department of Defense Advanced Research Projects Agency as director of information processing techniques which was followed in 1966 with a stint as a professor at Harvard University. The final section of the article predicted that time-sharing would have a major impact on reducing the costs of using computer graphics.

One of the more interesting aspects of this article is that Schmendal quotes a graphics survey done by Adams Associates based in Cambridge, Massachusetts which stated: “A substantial increase in the use of CRT (cathode-ray tube) display devices is now in the making.” This survey may well have been a precursor of the Computer Display Review, the periodic report the company later published. I had a major hand in initially producing that report and personally wrote its section on display technology.

Computer graphics developments

The latter half of the 1960s saw significant progress in the development of computer graphics devices, both line drawing refresh displays and storage tube displays. Point display refresh devices were still being used because of their relatively lower cost but they were starting to slip out of favor because they simply did not produce images of the quality required for engineering design work or similar applications.

Storage tube displays are discussed later in this chapter.

One of the major issues involving refresh display devices was that the image on the CRT screen had to be redrawn or refreshed 30 or more times per second in order to avoid having the image flicker. Initially this was accomplished by having the host computer feed the data to the display device vector by vector. In some cases this was done by continually regenerating vector information from a data file describing the data. The computer would determine how much of the image was to be displayed and clip any vectors that fell outside the viewing area. This type of operation ate up a considerable portion of the host computer’s computational capacity.

One way around the problem, and a way to enable the host computer to support multiple display devices simultaneously, was to offload as much of this work as possible from the computer to the terminal. The technique used was to equip the display terminal with its own memory and a computer-like processor that would store the data to be displayed and continually regenerate the image on the CRT. The stored data was called a “display list.” The idea had been around for several years and was not particularly novel.

The display processor continually regenerated the displayed image from the data stored in the display list without placing any computational requirement on the host computer. What was imaginative was to provide these display processors with a subroutine capability. Multiple copies of a symbol could be displayed even though the display list contained just a single copy of the graphical elements defining the symbol. The display processor would treat that single copy as a subroutine much like a programmer would in a host computer program.
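The subroutine idea can be illustrated with a small sketch. The item formats used here (`"line"` and `"call"` tuples) and the `expand` function are hypothetical, chosen only to show how a single stored copy of a symbol can appear several times in the displayed image. They do not represent the command set of any actual display processor.

```python
def expand(display_list, subroutines, origin=(0, 0)):
    """Expand a display list into absolute line segments.

    Items are tuples (a made-up format for this sketch):
      ("line", x1, y1, x2, y2)  draw a line relative to the current origin
      ("call", name, dx, dy)    draw the named subroutine offset by (dx, dy)
    """
    ox, oy = origin
    segments = []
    for item in display_list:
        if item[0] == "line":
            _, x1, y1, x2, y2 = item
            segments.append((ox + x1, oy + y1, ox + x2, oy + y2))
        elif item[0] == "call":
            _, name, dx, dy = item
            # The symbol's definition is reused, not copied into the list.
            segments.extend(
                expand(subroutines[name], subroutines, (ox + dx, oy + dy))
            )
        else:
            raise ValueError(f"unknown display item: {item[0]!r}")
    return segments


# One stored copy of a square symbol (four lines).
subroutines = {
    "square": [
        ("line", 0, 0, 10, 0),
        ("line", 10, 0, 10, 10),
        ("line", 10, 10, 0, 10),
        ("line", 0, 10, 0, 0),
    ]
}

# The display list refers to the symbol three times, plus one plain line.
display_list = [
    ("call", "square", 0, 0),
    ("call", "square", 20, 0),
    ("call", "square", 40, 0),
    ("line", 0, 15, 50, 15),
]

segments = expand(display_list, subroutines)
```

Here the display list holds four items and the symbol definition holds four more, yet thirteen line segments are drawn on each refresh pass. The saving in display memory grows with the number of times a symbol is repeated.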

Academic research centers, commercial companies and government laboratories were all working on developing display list processors. One early such project underway at the National Bureau of Standards was described in an article by D. E. Rippy and D. E. Humphries: “MAGIC – A Machine for Automatic Graphics Interface to a Computer” which appeared in the “Proceedings of the 1965 Fall Joint Computer Conference.”[10] The key issue in the development of devices such as MAGIC was to establish the proper balance between hardware and software functions.

Gradually, display devices took on more and more of the tasks required to generate displayed images. This included adding capabilities such as clipping the portion of images that fell outside the CRT screen, circle and arc generators and character generators. Rather than have software on the host computer define the individual elements of different line styles (short dashes, long dashes, dim, bright, etc.) each element in the display list could be coded for line type and the display processor would create the image accordingly.

Another group working on developing a graphic terminal that would place a nominal computing load on a host computer was Bell Telephone Laboratories in Murray Hill, New Jersey. Called GRAPHIC 1, the system they put together in 1965 consisted of a Digital PDP-5 minicomputer and a Digital Type 340 Precision Incremental Display as shown in Figure 4.2. The PDP-5 was a 12-bit machine with a 4,096-word memory and 18 microsecond add time but no hardware multiply/divide capability. It did have very flexible input/output features. The 340 used an incremental point display method which significantly limited the complexity of an image.

According to William Ninke, about 200 inches of line drawing or 1,000 characters could be displayed flicker free at 30 frames per second. Once an image was communicated to the GRAPHIC 1, it was stored in the PDP-5’s memory and the image continued to be refreshed without any further action on the part of the host computer. A variety of input devices were attached to this research system including a light pen, keyboards, toggle switches, potentiometers and a two-dimensional track ball. Access to an application program on the larger machine was required in order to make changes to the displayed image. At Bell Labs the host computer was a batch-oriented IBM 7094 that provided access to the GRAPHIC 1 at intervals ranging from every two to six minutes. This was not conducive to real-time applications and it demonstrated the need for commercial mainframe systems that could operate in a more interactive time-sharing mode.[11]

One subject the researchers at Bell Labs focused on was the relative benefits of using a light pen versus the recently developed Rand tablet. The primary tradeoff was the light pen’s ability to identify displayed objects given appropriate interface hardware and software as compared to the need to search the graphic database to find the item being indirectly pointed to with the tablet.

Ninke stated that they found the light pen to be preferred over the tablet. There may have been two characteristics of the GRAPHIC 1 system that led to this conclusion. First, the PDP-5 was not a particularly fast computer and without a hardware multiply and divide capability it would have been time-consuming to find the item the user was pointing to with the tablet stylus. Second, the amount of information that could be displayed on the GRAPHIC 1 screen was quite limited, making it easier for a light pen to distinguish between displayed elements. If a faster computer and/or a display with greater image capacity were used as part of the console configuration, the relative merits of the two devices might have been perceived differently.

Within two years, Bell Laboratories made substantial progress in developing interactive graphics tools. The IBM 7094 was replaced by a GE 645 mainframe computer that was specifically designed to support time-sharing software and multiple remote devices. The 645 had a memory of 256K 36-bit words and was approximately twice the speed of the 7094. Two types of graphics terminals were attached to the 645. One set, called the GLANCE system, provided view only graphics to time-sharing users whose primary device for data entry was a teletype terminal. Of more interest to our story was a new interactive terminal called the GRAPHIC 2. The PDP-5 used with the GRAPHIC 1 was replaced by a Digital PDP-9, a machine that was nearly an order of magnitude faster while substantially less expensive. The same basic architecture was used, however. Graphic files were downloaded from the host 645 computer over a 201 Dataphone modem at 2,000 bits per second, stored in the PDP-9 core memory and then used to refresh the CRT display. Interaction continued to use a light pen.

According to a paper presented at the 1967 Fall Joint Computer Conference by Carl Christensen and Elliott Pinson, response time for the new equipment was measured in seconds as compared to minutes for the GRAPHIC 1. For the most part, the software developed by Bell Laboratories during this time period provided basic data management and graphical services but did not go as far as complete applications that could be used for interactive engineering design.[13]

Other research groups looked for ways to reduce the amount of data that had to be transmitted to a remote graphics terminal being used in a time-sharing environment. Since communication lines in the mid-1960s typically operated at speeds as low as 300 bits per second, it could take a painfully long time to send an image to the remote terminal if it had to be done point-by-point or as a series of short vectors. This was becoming an increasingly serious problem as graphic applications were beginning to deal more frequently with curved lines as well as with storage tube displays which required that the entire image be redrawn whenever a graphical item was moved or deleted. MIT’s Project MAC developed a prototype system that reduced the amount of data needed to transmit curvilinear entities to only a few characters per line.[14]
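A rough calculation shows why this mattered. Only the 300 bits per second line speed comes from the text. The other numbers in this sketch (10 bits per character on an asynchronous line, 4 characters per short vector, 50 short vectors to approximate one curve, and 6 characters for a compact curve description) are illustrative assumptions, and `transmission_seconds` is a hypothetical helper.

```python
def transmission_seconds(num_items, chars_per_item,
                         bits_per_second=300, bits_per_char=10):
    """Time to send `num_items` graphical items over a serial line.

    bits_per_char=10 assumes 8 data bits plus start and stop bits,
    which is an assumption and not a figure from the text.
    """
    return num_items * chars_per_item * bits_per_char / bits_per_second


# One curve sent as 50 short vectors of 4 characters each (assumed values).
as_short_vectors = transmission_seconds(50, 4)

# The same curve sent as a single compact description of 6 characters.
as_compact_curve = transmission_seconds(1, 6)
```

Under these assumptions a single curve takes several seconds to send as short vectors but a fraction of a second as a compact description, and a drawing with many curves multiplies the difference accordingly.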

Development work on stand-alone and remote graphic terminals was also underway at IBM’s facility in Kingston, New York. The company interfaced one of its 2250 Model 4 displays to an IBM 1130 computer. The 2250 had originally been introduced as an IBM System/360 peripheral and was only used with other computers on an infrequent basis. The 1130 had superseded the IBM 1620 in 1965 and was a popular machine for engineering applications. It was not particularly fast by the standards of the late 1960s with an add time of eight microseconds. A Digital PDP-9 which sold in the same price range was nearly four times faster.

IBM personnel made several modifications to the 1130 to support the 2250 display and created software to support interactive operations. One key characteristic of this system was that the actual 2250 display commands were stored in the 1130 core memory and fed out to the display using a cycle stealing method. In effect, when the display needed the next command, its request to the computer for that data took precedence over all other computer operations, but it did so in a totally transparent manner. At 40 frames per second, this system could display the equivalent of about 2,100 inches of line drawing on the unit’s 21-inch display.

It appears that the primary intent of the 1130-based system was to act as a graphic interface for engineering programs being run on an IBM System/360 host. The unit was able to communicate with a host computer at speeds ranging from about 150 characters per second up to 30,000 characters per second. The latter was a very high data transfer rate for that era and probably would not have been economically viable for most engineering design organizations. There is no record that I have been able to find that indicates IBM ever developed commercial CAD software for the combination of the 1130 and 2250 nor does it appear that the company actively sold this type of configuration for others to develop that type of software.[15]

The growth of commercial display terminals

A number of companies were started during the 1960s to produce commercial refresh-type computer graphic terminals including Information Display Incorporated, Adage, Evans & Sutherland and Imlac. In addition, several of the computer manufacturers also entered this market including IBM, Control Data Corporation and Digital Equipment Corporation. Many of these display products required a computer system to provide the memory for refreshing screen images while others included the refresh memory in the display controller. Some had line, circle and character generators built into the base product while others sold these modules as options.

Computer display terminals of the late 1960s were not cheap. A typical system interfaced to a minicomputer sold for $45,000 to $120,000. A major element of the cost was in the graphics controller, rather than in the CRT display itself. As a consequence, most vendors came up with configurations that allowed users to attach multiple displays to a single controller which in turn was interfaced to a computer system. Some products, such as CDC’s Digigraphic units and IBM’s 2250, only interfaced to those companies’ own computers while the non-computer vendors stressed the ability of their units to interface to a wide range of different computers.

Perhaps the highest performance graphics terminals available by late 1968 were the AGT units being sold by Boston-based Adage. In a paper presented at the 1968 Fall Joint Computer Conference, Adage personnel pointed out that to dynamically scale and rotate graphical data in three-dimensional space would require the execution of one million instructions per second for an image containing 1,000 vectors and a refresh rate of 40 frames per second. Many of these instructions would be time-consuming multiply operations. While large host computers were capable of this level of performance, tying up such a machine to support interactive graphics was not economically feasible.

The Adage terminals not only incorporated a 30-bit minicomputer but also utilized a custom-designed display processor that was capable of translating and rotating up to 5,000 vectors at 40 frames per second. This device executed 16 specialized multiply operations and 12 adds in less than four microseconds. The company’s AGT10 was designed to support two-dimensional operations while the AGT30 and the AGT50 targeted three-dimensional applications. While the results were spectacular to view, the equipment was far too expensive to be practical for large volume CAD operations. Most of the units the company built were used in specialized applications. It was not until Adage began to build less expensive terminals that were plug compatible with IBM 2250-type displays that the company generated reasonably decent revenue.[16]
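The count of 16 multiplies and 12 adds happens to match the arithmetic needed to multiply a 4 by 4 transformation matrix by a four-element coordinate vector, the usual way of combining rotation, scaling and translation in one step. Whether the Adage hardware was organized exactly this way is an assumption on my part. The sketch below simply counts the operations in such a product, and the `transform` function is written for this illustration only.

```python
def transform(matrix, vector):
    """Multiply a 4x4 matrix by a 4-element vector.

    Returns the result together with the number of multiplies and adds
    performed, so the operation count can be checked.
    """
    multiplies = adds = 0
    result = []
    for row in matrix:
        total = row[0] * vector[0]
        multiplies += 1
        for m, v in zip(row[1:], vector[1:]):
            total += m * v
            multiplies += 1
            adds += 1
        result.append(total)
    return result, multiplies, adds


# A translation by (10, 20, 30) applied to the point (1, 2, 3).
translate = [
    [1, 0, 0, 10],
    [0, 1, 0, 20],
    [0, 0, 1, 30],
    [0, 0, 0, 1],
]
point = [1, 2, 3, 1]

moved, multiplies, adds = transform(translate, point)

# Time available per vector at 5,000 vectors and 40 frames per second,
# assuming one such transform per vector.
microseconds_per_vector = 1_000_000 / (5_000 * 40)
```

The time budget works out to five microseconds per vector, which is consistent with a transform that completes in less than four microseconds.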

The emergence of low-cost terminals

While vector refresh terminal technology was evolving at a rapid rate during this period, the cost of a typical unit was excessive for most applications. Although several commercial CAD systems, including CADAM and CATIA, would continue to use vector refresh terminals until the mid-1980s when IBM introduced the 5080 raster display, a lower cost alternative was obviously needed. The answer in the late 1960s was the storage tube display.

Storage tube CRTs had been around for several decades. Originally developed as the display component for oscilloscopes, they were also used as memory storage devices in early computers such as MIT’s Whirlwind I. As an interactive display device, they had a significant cost advantage over refresh displays but suffered from the fact that when any changes were made to the image being displayed, the entire screen had to be erased which took a large fraction of a second and then the entire image had to be regenerated. The latter step could take several minutes for a moderately complex image, especially if a serial interface was being used. While other companies built storage tubes, Tektronix was by far the leading producer since that company was also the world’s leading manufacturer of oscilloscopes. The company was rather slow, however, in appreciating the applicability of the storage tube as a low-cost display terminal.

Early developments involving the use of storage tube displays took place at MIT’s

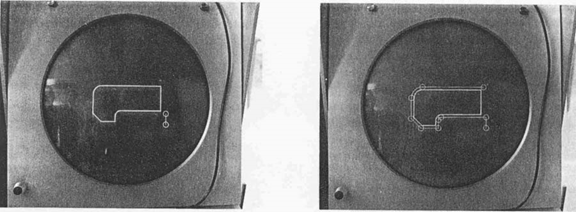

Project MAC. This led to the establishment of three commercial companies in the Cambridge area, Computer Displays, Computek and Congraphic. Computer Displays was founded by Rob Stotz and Tom Cheek and several other researchers from MIT’s Electronic Systems Laboratory. The company’s first product was called the ARDS (Advanced Remote Display Station) terminal.

Computek was founded by Michael Dertouzos who went on to become the long time director of MIT’s Laboratory for Computer Science until his death in 2001. The Computek Series 400 machines used an 11-inch diameter storage tube display and ranged in price from $6,700 to $11,200. Tablet input added $3,700 to $5,600 to the price tag. These units incorporated both curve and symbol generation capabilities as well as line generation with 1024 by 800 screen resolution.

Conographic was founded by Luis Villalobos who subsequently became a venture capitalist in California. The Conograph/10 provided a limited refresh capability in addition to the stored image. Up to 50 inches of line could be displayed in this refresh mode, a capability which significantly improved user interaction. This was a fairly sophisticated unit with 2048 by 1520 viewable resolution, a circle generator and a very advanced curve generator that required perhaps a tenth of the data to display a curve that other systems required. A fully configured unit sold for around $17,000. While higher priced than the Computek Series 400, the additional functionality and resolution probably justified the extra cost.

Eventually, Tektronix realized that there was money to be made selling terminals in addition to simply selling storage tube modules on an OEM basis. The company’s first terminal product was the Model T4002 which was a fairly basic device with 1024 by 1024 resolution on an 11-inch diagonal screen. Although the unit included line and character generators, it did not include a circle, curve or symbol generator. A typical unit sold for about $10,000. Tektronix had a much larger sales organization than Computer Displays, Computek or Conographic and fairly quickly became the dominating vendor in the low-cost storage tube terminal market. At the same time, it continued to sell storage tube displays on an OEM basis to any company that wanted to build its own terminals.

Along with the T4002, Tektronix also introduced the 4601 Hardcopy Unit. This device copied whatever was displayed on the terminal’s screen without necessitating any computer processing of the data. As a consequence it was far faster than a plotter but the image size was just 8½ by 11 inches. The paper had a silver emulsion on it that faded over time but these copiers were a fast way to get a quick copy of whatever you were working on. Over the years, Tektronix would sell a huge number of screen copiers and a tremendous volume of the paper they used. It was much like Hewlett-Packard’s current market position with inkjet cartridges.

The minicomputer becomes a key technology building block

Most of the early graphics research was done on large mainframe computers such as the TX-2 at Lincoln Laboratory or the big IBM System/360 machines used at General Motors and Lockheed. While a reasonably economic system could be configured if one of these machines could handle a moderate number of terminals, they were not amenable to fostering a commercial CAD industry. Basic mathematical instruction speeds were too slow to support more than a few terminals and the initial cost of a system was far too high.

One exception was the Electronic Drafting Machine developed at Itek, which later formed the basis of Control Data’s Digigraphics system. As described in Chapter 6, a Digital Equipment Corporation PDP-1 was initially utilized. One problem with early minicomputers was that comprehensive real-time operating systems were not available and application programmers were required to provide many of the basic capabilities that we take for granted today.

By 1964, several companies besides Digital, including Scientific Data Systems and Computer Control, were selling relatively low cost computers that were applicable to interactive graphics applications. Over the next five years, this portion of the computer industry underwent significant growth and the number of 16 to 24-bit machines that could support interactive graphic applications expanded rapidly. New vendors for this type of machine included Computer Automation, Control Data, Hewlett-Packard, Honeywell (which had acquired Computer Control), IBM, Interdata and Systems Engineering Laboratories. On the other hand, many of the larger computer manufacturers such as General Electric, Burroughs and Univac basically ignored this emerging market.

One of the most significant product introductions was Data General’s launch of the 16-bit Nova in January 1969. Ed deCastro, the founder of Data General, previously worked at Digital as a senior hardware design engineer and was involved in designing a new 16-bit minicomputer called the PDP-X. When Digital decided to pass on deCastro’s design in favor of another plan for what eventually became the PDP-11, he left and started his own company to build his version of the PDP-X. The Nova was the company’s first product. This machine was aggressively priced and was well tuned for interactive applications. The early versions were not particularly fast, however, with an add time of 5.9 microseconds, the equivalent of less than 0.2 MIPS.

The Digital PDP-11 was the minicomputer along with the Nova that really energized the early CAD industry. First shipped in early 1970, it was also a 16-bit machine. A basic system with a 4K (words not bytes) memory sold for $13,900. Additional memory was $4,500 per 4K words or the equivalent of $562,500 per megabyte. (Thirty-five years later memory sells for less than $0.10 per megabyte.) Digital would continue making this machine in one form or another until well into the 1990s. The initial operating systems for both machines were fairly basic and early CAD software developers were forced to provide most of this functionality themselves. The combination of a low cost minicomputer with 8K of memory, an 11-inch storage tube display and a tablet resulted in an economical hardware configuration for emerging CAD system vendors.
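The per-megabyte figure quoted above can be reproduced with a short calculation. The `cost_per_megabyte` helper below is written for this illustration. It shows that the $562,500 figure corresponds to counting a megabyte as 1,000 kilobytes, while counting it as 1,024 kilobytes gives a slightly higher number.

```python
def cost_per_megabyte(price_dollars, words, bits_per_word=16, kb_per_mb=1000):
    """Convert a memory price into dollars per megabyte.

    `words` is the memory size in words; a kilobyte is taken as 1,024 bytes.
    `kb_per_mb` selects the definition of a megabyte (1000 or 1024 KB).
    """
    kilobytes = words * bits_per_word / 8 / 1024
    return price_dollars / kilobytes * kb_per_mb


# 4K 16-bit words is 8 KB, priced at $4,500.
quoted = cost_per_megabyte(4500, 4096)
binary = cost_per_megabyte(4500, 4096, kb_per_mb=1024)
```

With these inputs `quoted` comes to $562,500 and `binary` to $576,000, so either way the price was well over half a million dollars per megabyte.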

Spreading the word

In 1965, Adams Associates, a consulting firm in Bedford, Massachusetts, began publishing a compendium of commercially available graphics terminals called the Computer Display Review. One of the significant characteristics of this publication was that the authors defined three test cases – a schematic diagram, an architectural floor plan and a weather map – and used these test cases to determine the time it would take to display each image based upon the manufacturers’ specifications.

An overview of then current graphics technology written by Carl Machover was published in the proceedings of the 1967 Fall Joint Computer Conference held in Anaheim, California. Machover, who is one of the graphics industry’s most respected consultants, was the vice-president of Information Display Incorporated at the time. While the paper did not provide extensive details about the different commercially available products being marketed at the time, it did contain an interesting list of 16 companies manufacturing display hardware. Except for Bolt, Beranek & Newman (now called BBN Technologies), IBM, and International Telephone & Telegraph (now called ITT Industries), none of these companies are still in business and only IBM is currently manufacturing computer products used for CAD applications.

The others, including Bunker-Ramo, Information Displays, Philco-Ford, and Scientific Data Systems, are all long gone, having been merged into other companies or simply closed down. All the systems sold by these manufacturers were random positioning refresh displays except for BBN which was one of the first to offer a storage tube device and Philco-Ford which had one of the first raster displays.

There are several significant aspects to Machover’s paper.

- The first was a discussion about the differences between electrostatic and magnetically deflected CRTs. The trend by 1967 was to use either a combination of the two types of deflection or a dual magnetic deflection setup that utilized a lower inductance coil and a higher inductance coil.

- The paper covered in depth what was meant by “flicker free” and generally concluded that for most phosphors this required a refresh rate of about 40 frames per second. An interesting aspect of this position is that most manufacturers claimed that 30 frames per second was sufficient. My personal experience was that at 30 frames per second the flicker was generally intolerable.

- Machover explained how most commercial units contained a display generator that could produce points, lines, alphanumeric characters and circles from a basic display list stored in either the computer’s memory or a memory contained within the display device itself. Most systems contained information in the display list that defined character size, vertical or horizontal orientation, line brightness, and line type such as solid, dots, dashes or dot/dash. He mentioned that the emergence of low-cost minicomputers would lead to the display systems incorporating these computers.

- Perhaps the most useful part of the paper was a discussion concerning the volume of data that could be displayed, considering the minimum acceptable frame rate. This was determined by the time it took for the display beam to move to a new position, the time to draw a vector and how well the data was organized so that beam movement between vectors and/or characters was minimized.[18]
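The relationship between frame rate and displayable data volume described in the last point can be illustrated with a rough estimate. This is only a sketch: the beam positioning and vector drawing times used here (10 and 15 microseconds) are hypothetical values chosen for illustration, not figures from Machover’s paper, and the model ignores character generation and display-list fetch overhead.

```python
def max_vectors_per_frame(frame_rate_hz, move_time_us, draw_time_us):
    """Estimate how many vectors fit in one refresh cycle.

    Each vector is assumed to cost one beam repositioning plus one draw.
    """
    frame_budget_us = 1_000_000 / frame_rate_hz
    return int(frame_budget_us // (move_time_us + draw_time_us))


# Hypothetical timings: 10 us to reposition the beam, 15 us to draw a vector.
at_40_fps = max_vectors_per_frame(40, 10, 15)   # 1000
at_30_fps = max_vectors_per_frame(30, 10, 15)   # 1333
```

Under these assumed timings, dropping from 40 to 30 frames per second buys about a third more vectors per frame, which may help explain why manufacturers preferred to quote the lower refresh rate.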

Image processing and surface geometry

At this early stage of graphics technology development, most developers were still struggling with being able to effectively work with two-dimensional line drawings. A number of interesting projects were underway at academic institutions such as MIT, Syracuse University and the University of Utah in creating three-dimensional models involving complex surfaces and in generating shaded images of these models. Research was also underway defining efficient methods for creating displays with hidden lines removed.

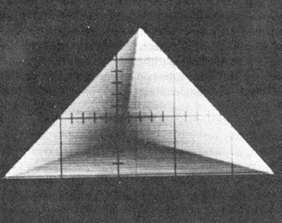

Some of the more interesting work was being done at the University of Utah where David Evans was developing techniques for creating shaded images on monochromatic displays.[19] The others working on this project were Chris Wylie, Gordon Romney and Alan Erdahl. Whether or not they were the first to use the technique, they became strong proponents of dividing surfaces into small triangles. “Any developable surface can be approximated arbitrarily accurately with small, but finite, triangles.”[20]

I remember visiting Evans around this time and being impressed by the work they were doing at Utah. The software was written in FORTRAN IV and called PIXURE. Both a cube made up of 12 triangles and a tetrahedron made up of four triangles took about 25 seconds to process on a UNIVAC 1108 at 512 by 512 resolution. Although this was a large mainframe by 1967 standards, it only had about 1.3 MIPS of processing power. PIXURE required about 14K words (36-bit) for storing a picture with 100 triangles. This was a serious limitation since the maximum memory for a UNIVAC 1108 was just 256K words and the typical machine had much less.

One of the objectives of the research team was to develop algorithms whose processing time expanded linearly with the target resolution of the image being generated and with the number of triangles in the image. A problem with far too many researchers is that they get mentally locked in to the performance restrictions of the computer hardware currently being used. The Utah group had the ability to see that future computers would provide far greater performance. According to the Utah team: “The parallel and incremental characteristics of the algorithm lead us to believe that real-time movement and display of half-tone images is very near realization.”[21]

An additional point was that the effectiveness of the algorithms they had developed depended to a great extent on the ability of software to convert arbitrary surfaces into a suitable mesh of triangles. Figure 4.5 shows a convex tetrahedron displayed at 512 by 512 resolution.

About the same time that early visualization work was underway at the University of Utah, Arthur Appel was tackling similar problems at the IBM Research Center in Yorktown Heights, N.Y. He experimented with using ray tracing techniques to create shaded images. Since color displays were still off in the future and contemporary refresh displays had limited ability to vary spot intensity, Appel utilized a technique called “chiaroscuro” with which artists and illustrators used light and shade to achieve a three-dimensional effect.

The work done at Utah required that the source of illumination be at the viewpoint and since it was a single point light source, no shadows could be generated. Appel’s ray tracing techniques allowed the light source to be placed in an arbitrary location enabling the software to create shadows. The images his software generated consisted of multiple plus signs. Shading was accomplished by varying the size of the plus signs as well as their spacing. Generating a shaded image of a rather simple part took about 30 minutes on an IBM 7094 computer. The images were then plotted on a CalComp plotter. While this was not a practical graphics application, it did lay the foundation for more effective approaches that would follow. See Figure 4.6 for an example of Appel’s work.

In a paper presented at the 1968 Spring Joint Computer Conference, Appel made a very prescient comment: “If techniques for the automatic determination of chiaroscuro with good resolution should prove to be competitive with line drawings, and this is a possibility, machine generated photographs might replace line drawings as the principal mode of graphical communication in engineering and architecture.”[23] It might have taken over 30 years for this to have occurred, but in many cases today, color shaded images of mechanical products, buildings and process plants are used as the primary means of exchanging design information between relevant parties.

Data management and application programming developments

As graphics hardware and computer technology began to mature in the mid-1960s it became increasingly obvious that new techniques had to be developed for storing and manipulating engineering design data. As described in the prior chapter, Doug Ross had made an important contribution with his “plex” architecture concept. A number of researchers associated with both academic institutions and commercial companies went to work on defining extensions to Ross’ original concept in attempts to make the development of interactive systems more efficient. For the most part, this work was constrained by contemporary hardware and communications equipment. One of the most significant limitations was the small amount of computer memory installed in most computers due to cost considerations. Remote communications had an upper limit of about 2,400 bits per second.

Ivan Sutherland’s initial graphics work at Lincoln Laboratory soon led to a much broader series of research projects, attracting some of the best talent associated with the development of computer graphics. Larry Roberts wrote several technical papers that helped define the theoretical foundation for managing display files and the matrix mathematics that formed the basis for much future work.[24] He worked with William R. (Bert) Sutherland, Ivan’s older brother, in 1964 to develop programming tools that would facilitate the implementation of graphic applications on Lincoln Laboratory’s TX-2 computer. CORAL (Class Oriented Ring Associative Language) was a service system consisting of a basic data structure, a collection of subroutines that would manipulate this data structure and a macro language for defining the data structure and the operations to be performed on it.[25]
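The general idea behind a ring-based data structure can be sketched in a few lines. The example below is not CORAL’s actual storage layout or subroutine set, which the text does not detail; it only illustrates, with hypothetical class and function names, how elements chained in a closed ring let a program walk from any element back to the item that owns the ring.

```python
class RingNode:
    """One element of a ring; a lone node points back to itself."""

    def __init__(self, payload, is_head=False):
        self.payload = payload
        self.is_head = is_head
        self.next = self


class Ring:
    """A closed chain of nodes anchored at a head node naming the owner."""

    def __init__(self, owner_name):
        self.head = RingNode(owner_name, is_head=True)

    def insert(self, payload):
        # Splice the new node in directly after the head.
        node = RingNode(payload)
        node.next = self.head.next
        self.head.next = node
        return node

    def members(self):
        # Walk the ring once, stopping when the head comes around again.
        found = []
        node = self.head.next
        while not node.is_head:
            found.append(node.payload)
            node = node.next
        return found


def owner_of(node):
    """Follow the ring forward from any member until the head is reached."""
    while not node.is_head:
        node = node.next
    return node.payload


picture = Ring("picture")
line_a = picture.insert("line A")
line_b = picture.insert("line B")
```

Because the chain closes on itself, `owner_of(line_a)` finds `"picture"` without any separate back-pointer, which is one reason such structures suited associative queries on machines with little memory.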

Andries van Dam and David Evans, while working at Brown University and the University of Pennsylvania respectively, developed a compact data architecture called PENCIL (Pictorial ENCodIng Language) that minimized the extent to which pointers were used. PENCIL supported the easy addition and deletion of subpictures, required no entity identification number for browsing through a file and kept the overhead per subpicture low. The key aspect of their work was that it served as a foundation for other research in graphical data management.[26]

Around 1967, Harvard University joined the growing number of academic institutions working on interactive graphics technology. One of the first people to join its staff in this area was William Newman, who had earned his Ph.D. at the University of London, come to the United States a year or so earlier and initially worked at Adams Associates in Bedford, Massachusetts. Newman would go on to write one of the most widely read books on the subject of computer graphics with Robert Sproull, “Principles of Interactive Computer Graphics.”[27]

In a paper presented at the 1968 Spring Joint Computer Conference in Atlantic City, New Jersey, Newman described some of the work then underway at Harvard in developing problem-oriented programming languages for graphic applications. The basic principle was fairly straightforward – for every action taken by a user, there was a specific reaction executed by the computer depending upon the current state of the program. Newman felt that any language used to develop graphic programs needed to be as simple as possible if it were to be used by a wide range of programmers. The software developed at Harvard, the Reaction Handler, met these criteria. In his paper, Newman contrasted the Reaction Handler to the ICES System developed by Dan Roos at MIT, which he felt was not applicable to interactive tasks, and the AED System being developed by Doug Ross at MIT’s Project MAC.[28] The latter was a very generalized language which had attractive features but probably was overly sophisticated for commercial applications.[29]
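The action, state and reaction principle Newman described maps naturally onto a table-driven state machine. The sketch below is an illustration of that principle only, not a reconstruction of the Reaction Handler’s language; the state names, action names and reactions are all hypothetical.

```python
# (current state, user action) -> (reaction to execute, next state)
# All names here are hypothetical, chosen to resemble drawing a line.
TRANSITIONS = {
    ("idle", "pen_down"): ("start_line", "drawing"),
    ("drawing", "pen_move"): ("extend_line", "drawing"),
    ("drawing", "pen_up"): ("finish_line", "idle"),
}


def run(actions, state="idle"):
    """Feed a sequence of user actions through the table.

    Returns the list of reactions and the final state. Actions that are
    not defined for the current state are ignored and leave it unchanged.
    """
    reactions = []
    for action in actions:
        key = (state, action)
        if key not in TRANSITIONS:
            reactions.append("ignore")
            continue
        reaction, state = TRANSITIONS[key]
        reactions.append(reaction)
    return reactions, state


reactions, final_state = run(["pen_down", "pen_move", "pen_move", "pen_up"])
```

Keeping the whole dialogue in one table is what makes this style simple for a wide range of programmers: adding a new interaction means adding rows rather than restructuring control flow.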

Perhaps the most comprehensive paper describing the requirements for a graphics system that was published during this period appeared in the proceedings of the 1968 Fall Joint Computer Conference. Written by Ira Cotton of Sperry Rand Corporation and Frank Greatorex of Adams Associates, it described a system capable of supporting remote graphics terminals being implemented on a UNIVAC 1108 computer. The graphics stations consisted of UNIVAC 1557 Display Controllers and UNIVAC 1558 Display Consoles. The authors defined their basic objective of providing the fastest possible response for console operators while at the same time minimizing the load placed on the host computer.[30]

Cotton and Greatorex’s work required a careful analysis of which functions should be handled by the remote consoles and which should be handled by the host computer. The database they proposed extensively utilized ring chaining as developed by Bert Sutherland at Lincoln Laboratory. There was also significant emphasis on making the system as hardware independent as possible. If the details of the remote consoles changed, that was not supposed to affect the host software. This was a laudable goal that many organizations would attempt to meet in subsequent years but few would accomplish until the personal computer and Windows became industry standards in the 1990s.

Like other systems being developed at that time, they had to contend with communications links that had a maximum speed of 2,400 bits per second. As a consequence, data transferred from the host to the remote consoles were compressed to the maximum extent possible. Data describing conic entities were transferred in a parametric format and then expanded at the remote terminal into actual displayable elements. The team working on this project included R. Ladson, N. Fritchie and G. Halliday of UNIVAC and Dan Cohen and Roger Baust of Adams Associates. Much of the research work going on at Lincoln Laboratory at the time was being done under contract by Adams Associates personnel.

Displaying complex images

As noted in several places in this narrative, the computer systems available during the mid to late-1960s were either relatively expensive or if more affordable, had limited computational performance. According to the October 1966 issue of the Adams Associates Computer Characteristics Quarterly, the rental cost for an IBM 360 Model 65 or Model 67 computer started at $34,000 per month and could go up to $100,000 per month. This was for a machine with an add time of 1.3 microseconds and a maximum internal memory of 1MB. Lower cost minicomputers that could support interactive graphics were starting to become available but a Scientific Data Systems 930 which leased for $2,650 per month had an add time of 3.5 microseconds and a maximum memory of 32K 24-bit words.[30]

Generating complex curves on a display terminal required either a considerable number of instruction executions if it were to be done each time an image was refreshed or a considerable amount of memory to store a large number of short vectors if it were to be done once and the data saved in memory. Therefore, a number of researchers explored different means to display these curves more efficiently. A substantial amount of work was done throughout the 1960s at Lincoln Laboratory by Tim Johnson, Larry Roberts, John Ward, Charles Seitz and Howard Blatt including the building of experimental hardware for generating conic curves independent of the host computer.[31][32]

During the late 1960s Harvard University also became a hotbed of graphics research with people such as Ivan Sutherland, Robert Sproull, Dan Cohen, Ted Lee and Robin Forrest leading some of the more significant work. One project Sutherland and Sproull collaborated on was the implementation of a fast method for displaying portions of two and three-dimensional images on a CRT screen. Usually referred to as windowing, it is a computationally intensive task when done using brute force methods. Sutherland and Sproull, working with Cohen and Lee, both of whom were Ph.D. candidates at Harvard, came up with a technique for finding elements falling within a window by calculating successive midpoints of a line. This method was computationally far less intensive than prior techniques.

Called a clipping divider, the algorithm they defined was subsequently implemented in hardware. This was an independent device that logically sat between the computer and the display terminal. The computer would feed it raw graphic elements and the clipping divider would determine what portion, if any, of each element fit within the display window, calculate its translated coordinate values and then send those values to the display terminal, where they would be stored in the unit’s display memory.
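The idea of finding the visible part of a line by calculating successive midpoints can be sketched in software. This is a simplified illustration of midpoint subdivision, not the clipping divider’s actual hardware logic: the function names, the region-code encoding and the floating-point tolerance are assumptions, and the real device would have worked on fixed-point values, where halving is a simple shift.

```python
def outcode(point, window):
    """Classify a point against the window edges with a 4-bit region code."""
    x, y = point
    xmin, ymin, xmax, ymax = window
    code = 0
    if x < xmin:
        code |= 1
    elif x > xmax:
        code |= 2
    if y < ymin:
        code |= 4
    elif y > ymax:
        code |= 8
    return code


def visible_pieces(p0, p1, window, tol):
    """Recursively halve the segment, keeping pieces wholly inside the window."""
    c0, c1 = outcode(p0, window), outcode(p1, window)
    if c0 == 0 and c1 == 0:
        return [(p0, p1)]          # both ends inside: accept
    if c0 & c1:
        return []                  # both ends beyond the same edge: reject
    if abs(p1[0] - p0[0]) <= tol and abs(p1[1] - p0[1]) <= tol:
        return []                  # straddling piece too small to matter
    mid = ((p0[0] + p1[0]) / 2, (p0[1] + p1[1]) / 2)
    return (visible_pieces(p0, mid, window, tol)
            + visible_pieces(mid, p1, window, tol))


def clip_segment(p0, p1, window, tol=1e-6):
    """Return the visible part of a segment as (start, end), or None."""
    pieces = visible_pieces(p0, p1, window, tol)
    if not pieces:
        return None
    # The window is convex, so the accepted pieces form one continuous run.
    return pieces[0][0], pieces[-1][1]


window = (0.0, 0.0, 10.0, 10.0)
crossing = clip_segment((-5.0, 5.0), (15.0, 5.0), window)
outside = clip_segment((-5.0, -5.0), (-1.0, 20.0), window)
inside = clip_segment((1.0, 1.0), (9.0, 9.0), window)
```

Only additions, comparisons and halvings are needed, with no multiplication or division by arbitrary values, which is what made the approach attractive to build in hardware.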

The Harvard team built a matrix multiplier unit to facilitate the three-dimensional transformations needed for the translation of curved geometry. They were also exploring combining new hidden line removal algorithms developed at the University of Utah by John Warnock (who would go on to form Adobe Systems a few years later) with the clipping divider but it appears that this was never completed, at least not at Harvard. Before 1968 was over, Sutherland moved on to the University of Utah and Sproull to Stanford University.[33] A few years later Sutherland was one of the founders along with David Evans of Evans & Sutherland in Salt Lake City. That company used a version of the clipping divider in its early graphic systems.

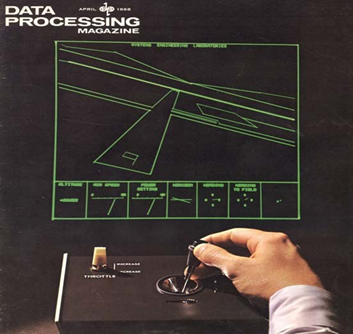

There were a number of other very bright people working at this time on applying advanced mathematical techniques to the display of complex geometric curves and surfaces. Possibly one of the brightest was Cohen who moved to Harvard University after working at Adams Associates. While at Adams Associates, Cohen worked with Frank Greatorex to program a Systems Engineering Laboratory minicomputer to simulate aircraft flight operations. I do not know if this was the first graphics flight simulator, but it was definitely one of the early such programs. SEL had hired Adams Associates to program this application so it could be used to demonstrate their hardware at trade shows. It was first used at the 1967 Spring Joint Computer Conference and was one of the hits of the show.[34]

At Harvard’s Aiken Computation Laboratory, Cohen and Lee teamed up to explore mathematical procedures for displaying generalized curves. The work they did is far too sophisticated for a detailed description to be included in this book but suffice it to say that they pushed forward the state of the underlying principles guiding future graphics technology developments.[36]

In-house CAD developments gain momentum

The major difference between research laboratory developments, whether done in an academic or industrial environment, and development done in a commercial environment is that the latter activity is expected to produce usable results within a reasonable period of time. A good example is the work done in the mid-1960s at Lockheed-Georgia Company. This work was briefly covered in the Wall Street Journal article mentioned earlier. A more comprehensive description of the effort underway in the mid to late 1960s at Lockheed-Georgia is contained in a paper presented by S. H. Chasen at the 1965 Fall Joint Computer Conference in Las Vegas and in a book, Interactive Graphics for Computer-Aided Design by M. David Prince, published in 1971.[37]

Lockheed-Georgia installed a UNIVAC 418 computer with a Digital Type 340 display in 1963. The UNIVAC 418 was a relatively new 18-bit machine with a four microsecond add time and hardware floating point. Since there was little existing interactive graphics software that could be utilized for this system, the Lockheed-Georgia programmers had to develop the software from scratch. It appears from Chasen’s paper and Prince’s book that they closely followed other graphics projects such as Sutherland’s Sketchpad and the work Ross and Coons were doing in association with MIT’s Project MAC.

The Lockheed system used a light pen, as did most graphics projects of that era, along with a 28-button function box. The purpose of each button was specific to the application currently being used. The software was capable of creating and editing three-dimensional models which could be viewed in a traditional three orthogonal and one perspective view format. Isometric views were also supported using a separate program. The creation and editing capabilities for working in three-dimensional space were fairly basic – points, lines, circular arcs, rotation about an axis perpendicular to the view, and scale change. When functioning in a two-dimensional mode, additional graphics capabilities such as constructing a circle tangent to two circles were available. Given the constraints of the Type 340 display, the models and images that could be handled were quite limited.

The company had a team of about 20 programmers working on the project. There was a long-range group working on basic graphics functionality and a near-term group working on applications that were intended to be operational in 1965. The latter group was working on two projects, the ability to mathematically define aircraft surfaces (technology which is still evolving today) and the ability to produce NC control tapes for two-dimensional milling machines.

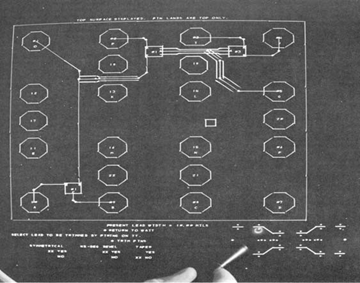

While Lockheed-Georgia was using APT for part programming, the graphics development team believed that they could improve the process using interactive graphics. The initial software for doing so was fairly basic. Once the geometry of the part was defined, the user would indicate the path the milling machine should take segment by segment as shown in Figure 4.8. The first production part was a rudder control pulley for the C-141 aircraft which was produced on the UNIVAC 418 in 1965. This may well have been the first part programmed using computer graphics in the aerospace industry.

The program for generating tool paths was called PATH. The user was able to define the starting location of the tool and the depth of the cut along with the radius of the tool. With that information, each surface to be machined was selected by the user and the information necessary to direct the tool would be calculated by the software, bypassing the APT system then being used.

Chasen was very aware even at this early stage in the development of CAD/CAM systems that the technology would have a significant impact on how engineering design and manufacturing would be practiced in the future. “For example, current design practices require a sequence of relatively autonomous operations….With computer-aided design, the team concept may be altered considerably.”[37] Truer words have rarely been spoken in this industry.

Of particular interest are Chasen’s comments on the relationship between aerospace firms and computer manufacturers with regard to the development of interactive graphics systems. He felt that the computer manufacturers should concentrate on developing hardware and leave the development of graphics applications to the likes of Lockheed-Georgia. “Though Lockheed-Georgia may use some of the manufacturer’s software features when they become available, we believe that the creation of our own program system for our own applications offers the greatest flexibility and, therefore, the greatest success in long term operations.”[39]

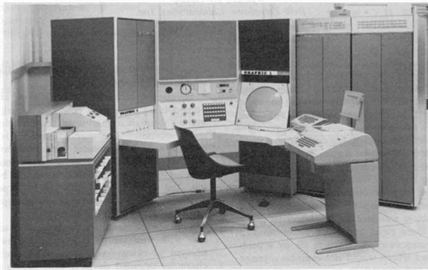

The work Chasen described showed that a computer dedicated to a single display console was frequently idle between user requests for action. The company felt that a single computer could therefore handle a number of such consoles. In the fall of 1965 Lockheed-Georgia placed an order with Control Data Corporation for a CDC 3300-based system with three 22-inch Digigraphics display consoles. The CDC 3300 was a fairly fast computer with a 2.75 microsecond add time and a 32K word main memory – each word 18 bits in length. The Digigraphics displays were refreshed from a six-track drum storage device which rotated every 33 milliseconds. Each track stored 10,000 words which resulted in the system’s ability to display far more complex drawings than the DEC Type 340 used previously. A second two-terminal system was installed to support research work at Lockheed-Georgia.

As described by James Kennedy in a paper presented at the 1966 Fall Joint Computer Conference in San Francisco, Lockheed-Georgia stripped out part of the software Chasen described earlier and used it as the basis for a two-dimensional drawing system.[40] Most of Kennedy’s paper dealt with enhancements Lockheed-Georgia made to the CDC operating system to provide an improved time-sharing environment rather than describing the applications the system was applied to. The resulting software, the Graphic Time Shared System (GTSS), is described in greater depth in Prince’s book.

The key application was the design of parts that were subsequently produced using NC machine tools. According to Prince, critically needed parts could be turned around in about 24 hours as compared to a week or more using APT. Over 50 parts were designed and programmed using this system for the C-5A then being built by Lockheed.

The CDC system was supplemented by an IBM System 360/50 computer with three 2250 display terminals in 1968. It is interesting to note that there seems to have been very little coordination between Lockheed-Georgia and Lockheed-California during this period. The Lockheed-California CADAM software is discussed in depth in Chapter 13.

IBM develops hybrid circuit design system

Although this book focuses on mechanical and to a lesser extent on AEC applications, the work done at IBM on developing a hybrid circuit design system in the mid-1960s is important because it is a good example of IBM’s focus on user interaction issues that eventually became important in its work with Lockheed-California and CADAM. IBM System 360 computers were constructed primarily of small hybrid integrated circuits about a half inch square. Each circuit typically consisted of several discrete transistors and/or diodes, several resistors and the interconnecting circuitry. The manufacturing process for these modules involved a number of steps that required producing several graphical layout patterns or masks.

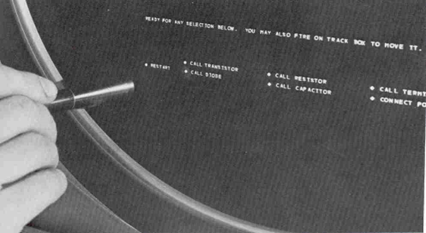

The system developed by IBM at its Hopewell Junction, New York facility did not have a specific name associated with it – perhaps one reason why the company’s pioneering work has not been more widely recognized in subsequent years. The hardware configuration for this system consisted of a relatively slow IBM 1620 Mod II computer which had an add time for a pair of five-digit numbers of 140 microseconds, two IBM 1311 disk drives, each of which stored 2 MB of data, and a 19-inch display with function keys and a light pen. The display had its own memory and was able to display 1,023 straight line segments, about 5,000 characters or a combination thereof. Hard copy was produced on a 29-inch incremental plotter.[41]

The user interacted with the circuit design and layout software by pointing the light pen at “light buttons” displayed on the bottom of the display surface as shown in Figure 4.9. The process proceeded in two major steps. The first phase was to define a circuit schematic. With earlier electronic design software, this step had been done by reading data entered on punch cards. Interactive circuit design used the light pen to select circuit components, place them in a logical arrangement and then define the connections. Values for the different circuit elements were then entered manually using the console keyboard. This was followed by using a program running on the 1620 to calculate the required size of each resistor, a key step in designing a hybrid circuit.

The second phase of the design process was to create the physical layout of the hybrid circuit itself. The software created a component list from the schematic data and displayed the list on the screen along with a blank module substrate. The user then selected items from this list and placed them on the substrate blank. The software ensured that all the items in the component list were placed on the substrate and that the operator did not add anything that was not defined in the schematic diagram. As each component was placed by the operator, it was removed from the list, clearly indicating to the operator those items left to be placed.
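The bookkeeping described here, where the schematic drives a shrinking list of components still to be placed, can be sketched as follows. This is an illustration of the checking logic only and makes no claim about how the IBM software was written; the class name, method names and component labels are hypothetical.

```python
class LayoutSession:
    """Track which schematic components have been placed on the substrate."""

    def __init__(self, schematic_components):
        # The list shown to the operator starts as a copy of the schematic.
        self.unplaced = list(schematic_components)
        self.placed = {}

    def place(self, name, x, y):
        # Refuse anything the schematic does not call for (or already placed).
        if name not in self.unplaced:
            raise ValueError(f"{name} is not awaiting placement")
        self.unplaced.remove(name)
        self.placed[name] = (x, y)

    def is_complete(self):
        # The layout is finished only when the list has been emptied.
        return not self.unplaced


session = LayoutSession(["R1", "R2", "Q1"])
session.place("R1", 0.10, 0.25)
remaining = list(session.unplaced)      # ["R2", "Q1"]
```

Driving the layout from the schematic in this way means the physical design cannot silently drift from the logical one, the same verification idea the text later notes was applied in process plant design.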

As the components were placed, the software generated point-to-point connections between components as defined by the schematic diagram. The operator could then clean up the interconnections by inserting break or bending points in the lines. These lines could be displayed as single line interconnections or the actual width of the lines could be displayed as illustrated in Figure 4.10. Once the layout was completed the data could be preserved on punch cards or the artwork for the circuit masks plotted on the attached plotter.

According to Koford, “…the development of the system described in this paper has shown beyond a doubt that the use of graphic data processing techniques can result in significant improvements in both the ease and the speed with which integrated circuit artwork may be produced.”[43] As the electronics industry moved from using hybrid circuit modules to more complex integrated circuits, the use of computer graphics for producing production artwork would grow rapidly.

By the early 1970s, artwork production systems for integrated circuits and printed circuit boards would be a major source of income for Calma, Computervision, Gerber and Applicon as described in subsequent chapters. For the most part, these systems focused predominately on the geometric artwork portion of the task and it would be another decade before companies such as Mentor Graphics and Cadence began to link schematic design with artwork production into a single system. The technique of defining a schematic layout and then designing the physical implementation of that layout using the data in the schematic layout to verify the physical design was extensively applied in process plant design by Intergraph and others.

General Motors defines graphic requirements

In a paper presented at the 1967 Fall Joint Computer Conference in Anaheim, California, John Joyce and Marilyn Cianciolo of the General Motors Research Center in Warren, Michigan provided an excellent summary of the desirable characteristics of interactive graphic systems. Although not limited to engineering design applications, their comments concisely described the key features a CAD system should have with a special focus on user interface issues. This work was based on experiments conducted at the research center using three different hardware configurations – the original DAC-I system described in the previous chapter followed by an IBM 360/50 with 2250-I displays and an IBM 360/67 with 2250-III displays. It should be noted that the authors’ comments were predicated upon the use of stroke refresh displays and light pens for user interaction.

Many of the statements made by Joyce and Cianciolo would eventually become features of commercial CAD systems including those using storage tube graphics and tablets. Some of their key points were:[45]

- Typical users will have little computer experience.

- Systems must be implemented such that users can do productive work for several hours per day.

- Fast response time is a key factor in implementing an effective application.

- The use of alphanumeric data to drive these systems should be minimized.

- Many errors can be eliminated by allowing just syntactically correct data to be selected.

- Data of similar types should be able to be grouped together. Although not mentioned in this paper, the concept eventually led to use of layers in most CAD systems.

- Selective brightening of data is a powerful feedback mechanism. Shading would be useful for user understanding of three-dimensional surfaces. Blinking of displayed entities was also discussed as a tool to attract the user’s attention.

- Graphical communication systems must be natural and convenient to use.

- The number of steps required to accomplish a particular task should be minimized.

- Application programmers should be able to selectively permit or disable the use of different input devices.

- The display controller should be able to identify a selected graphical entity without requiring the host computer to perform extensive database searches.

- No artificial restraints should be placed upon the amount of data displayed other than the physical limitations of the hardware.

Description of databases assembled in ring structures closely followed the work of Ross and Rodriquez described in the previous chapter. The capabilities described in this paper were implemented at GM in a series of subroutines written in PL/1. Applications could be implemented by calling these subroutines for maintaining displayed images and interactive graphic tasks such as light pen selection of entities.